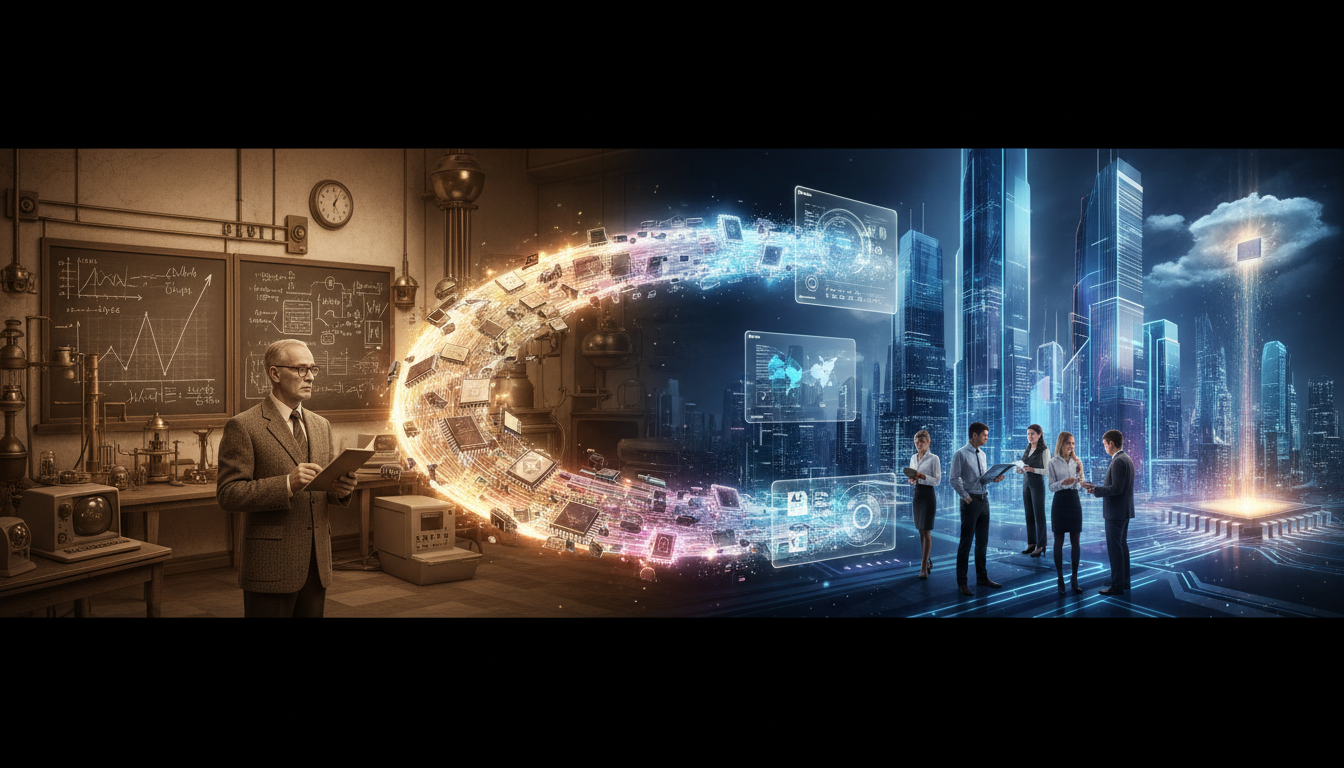

Moore's Law: From Observation to Guiding Principle

Have you ever wondered how a simple observation about chip density transformed the tech world? Moore's Law began as a short-term prediction but evolved into a roadmap that shaped decades of semiconductor investment and innovation.

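Imagine sitting in 1965 with Gordon Moore, then at Fairchild Semiconductor and later a co-founder of Intel, as he notes a trend: the number of components on a chip has been doubling roughly every year. A decade later, in 1975, he revised the pace to a doubling about every two years, the version most people know today.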
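The doubling rule is easy to put into numbers. The sketch below is a minimal illustration, not a model of any real chip: the function name `projected_transistors` and the starting count of 1,000 are hypothetical, and the two-year default is simply the commonly cited pace, assumed to hold steadily.

```python
def projected_transistors(initial_count, years, doubling_period_years=2.0):
    """Project a transistor count assuming steady exponential doubling."""
    if doubling_period_years <= 0:
        raise ValueError("doubling_period_years must be positive")
    # Each elapsed doubling period multiplies the count by two.
    return initial_count * 2 ** (years / doubling_period_years)


if __name__ == "__main__":
    # Hypothetical chip with 1,000 transistors; not a real product.
    for years in (2, 10, 20):
        print(years, round(projected_transistors(1_000, years)))
```

Under these assumptions, twenty years means ten doublings, which multiplies the starting count by 1,024. That compounding is why a steady cadence adds up so quickly.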

Moore's original projection looked only about ten years ahead, but it quickly morphed into something much larger.

This simple idea ignited a technological revolution. Companies began to treat it as a target, and it became a self-fulfilling prophecy that shaped how they invested in research and development.

Suddenly, the race was on.

Semiconductor firms poured billions into innovation, eager to keep pace with this doubling phenomenon.

Over the decades, this urgency led to remarkable advancements, not just in performance, but in energy efficiency and cost-effectiveness.

But here’s the intriguing part: as the tech industry rallied around Moore's Law, did it drive creativity or stifle it?

Was it the roadmap to success, or did it create a narrow path that left little room for alternative innovations?

Even today, as we approach the physical limits of silicon, we're left with questions about the future of computing.

What comes next?

The search for answers continues, and Moore's Law still casts a long shadow, inviting us to explore new horizons in technology.